The “Yin-Yang” grid, or “tennis ball” grid, is under consideration for use in future climate models.

Making a timely weather forecast, or running a high-resolution global climate model, requires the use of a massive supercomputer. Simulations that would take months to run on a normal computer take just hours on a supercomputer. However, supercomputers are far from magic. Programs have to be designed in very specific ways in order to make full use of a supercomputer’s capabilities. It is well known that current weather and climate models will be incapable of making good use of future supercomputers. Next-generation models will need some drastic changes in order to make better use of the supercomputers that they will run on. This Snack explains what the problem with current models is, and why it will be necessary to change the underlying grid that the model uses.

Supercomputers aren’t actually very super — each individual processing core is no better than those in the computer on your desk. However, the computer on your desk probably has just two or four cores. The Met Office’s computer has over 18,000 cores, and the top supercomputer in the world — at the time of writing, at least — has over three million! These processing cores do not grind away in isolation: they must talk to each other, many times each second, while the simulation is taking place. Processors do calculations at an incredible speed, but communication between processors is (in comparison) very slow.

This ‘inter-processor’ communication slows things down, and is actually the main bottleneck to running ever-larger simulations. Future models will need to reduce the amount of communication they require, compared to the models of today.

Where does this communication come from? To answer that, we need to know some basics about how these models work. At the heart of any atmospheric general circulation model is a component that reproduces the dynamics, or motion, of the atmosphere.

The dynamics component solves several mathematical equations. These are written as partial differential equations, governing wind velocity, air pressure, air temperature, and so on. Within the model, these equations cannot be solved exactly and need to be approximated. This is where the grid comes in: the pressure, wind velocity, etc., are stored only at points on the grid.

A typical “latitude-longitude” grid. Image source: http://mitgcm.org/cubedsphere/latlongrid-40×20-whole.jpg

If the Earth were flat, it would be natural to use a rectangular grid of points. Instead, the grid normally used is a “latitude-longitude” grid, as shown above. This grid is perfect in almost every way. There is a regular structure, which allows the model to run faster. The gridboxes are quadrilaterals, which (for rather technical reasons) is good for accuracy. Finally, lines of latitude and longitude cross at right-angles, which is also thought (by people in the know) to improve accuracy.

However, the grid has one glaring problem. The lines of longitude all come together at the poles. This means that, near the poles, there are lots and lots of gridpoints close together. To give a specific example, in the Met Office’s global model, the average grid spacing is 25 km. However, the gridpoints in the circle nearest the pole are just 70 m apart. Why is this bad?
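To see where a figure of that order comes from, here is a minimal sketch of the east-west spacing on a latitude-longitude grid. The grid size (1600 by 800 points) and the placement of the row nearest the pole are assumptions chosen to give roughly 25 km spacing at the equator. They are not the Met Office’s actual configuration, so the polar figure comes out at tens of metres rather than exactly 70 m.

```python
import math

EARTH_RADIUS_KM = 6371.0

def east_west_spacing_km(lat_deg, n_lon):
    """Distance between neighbouring gridpoints along a circle of latitude."""
    circumference = 2.0 * math.pi * EARTH_RADIUS_KM * math.cos(math.radians(lat_deg))
    return circumference / n_lon

# Hypothetical grid: 1600 points around each latitude circle, 800 latitude rows.
N_LON, N_LAT = 1600, 800
dlat = 180.0 / N_LAT
polar_row_lat = 90.0 - dlat / 2.0  # assumed position of the row closest to the pole

for lat in (0.0, 45.0, 80.0, polar_row_lat):
    spacing_m = east_west_spacing_km(lat, N_LON) * 1000.0
    print(f"latitude {lat:8.4f} deg: spacing {spacing_m:10.1f} m")
```

The spacing shrinks in proportion to the cosine of the latitude, which is why it collapses so dramatically in the last few rows before the pole.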

Remember that we’re trying to simulate some physical equations. In a small interval of time, what happens at one gridpoint only depends on events in a nearby region. On most of the Earth’s surface, this nearby region will only contain a few gridpoints, which is fine. But near the two poles, this nearby region can contain many, many other gridpoints, since the gridpoints are all so close together. Why does this become a problem for supercomputers?

When a simulation is started, each processing core gets assigned a small patch of gridpoints. Let’s look more closely at one of these patches, belonging to, say, Processor A. A gridpoint near the edge of this patch might be affected by some gridpoints on a different (but nearby) patch, say Processor B’s. Processor A will have to ask Processor B about these gridpoints (for example, their pressures, temperatures and wind velocities) in order to update the details of its own gridpoints. This is where communication between different processors comes from.
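The exchange between Processor A and Processor B can be sketched in a few lines. This is a toy illustration only: the row of temperatures, the two-way split and the averaging rule `smooth` are all made up for the example, and the “communication” is just a copied value rather than a real message between processors.

```python
def smooth(values):
    """Replace each interior point by the mean of its two neighbours."""
    return [0.5 * (values[i - 1] + values[i + 1]) for i in range(1, len(values) - 1)]

# A hypothetical row of 8 gridpoint temperatures, split between two processors.
row = [10.0, 12.0, 11.0, 13.0, 15.0, 14.0, 16.0, 18.0]
patch_a, patch_b = row[:4], row[4:]

# "Communication": each processor receives the edge value of the other patch.
halo_from_b = patch_b[0]   # sent from B to A
halo_from_a = patch_a[-1]  # sent from A to B

# Each processor updates its own points using its patch plus the received value.
new_a = smooth(patch_a + [halo_from_b])   # updates points 1 to 3 of the row
new_b = smooth([halo_from_a] + patch_b)   # updates points 4 to 6 of the row

# The combined result matches an update done on the undivided row.
print(new_a + new_b == smooth(row))
```

Here each update only reaches one gridpoint sideways, so one value per edge is enough. The further the update reaches in terms of gridpoints, the more values have to be sent.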

On most of the Earth’s surface, this amount of communication is quite small, which is fine. But for a patch near the poles, the situation is much worse: there are lots of gridpoints in a small area, so lots of communication needs to be done with other processors. Remember that too much inter-processor communication is like Kryptonite to a supercomputer! This “excessive” communication within the polar regions is already an annoyance, and will ultimately slow things to a crawl, dooming the latitude-longitude grid. This is despite the other advantages listed above.
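A rough way to put numbers on this is to count how many east-west neighbours lie within a fixed physical distance of a gridpoint. The sketch below assumes a hypothetical 1600-point latitude circle and a hypothetical “reach” of 25 km per time step. Both numbers are illustrative, not taken from any real model.

```python
import math

EARTH_RADIUS_KM = 6371.0

def halo_width(lat_deg, n_lon, reach_km):
    """Number of east-west neighbour columns lying within reach_km of a point.

    Capped at n_lon // 2, beyond which the whole latitude circle is covered.
    """
    dx = 2.0 * math.pi * EARTH_RADIUS_KM * math.cos(math.radians(lat_deg)) / n_lon
    return min(math.ceil(reach_km / dx), n_lon // 2)

# Hypothetical settings: 1600 points per latitude circle, 25 km reach per step.
for lat in (0.0, 60.0, 85.0, 89.8875):
    print(f"latitude {lat:8.4f} deg: {halo_width(lat, 1600, 25.0)} columns needed")
```

With these assumptions a single neighbouring column suffices at the equator, while the row nearest the pole needs hundreds, each of which may belong to a different processor.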

In light of this, a next-generation model will probably use an alternative to the latitude-longitude grid. The rest of the Snack provides a brief guide to the alternatives.

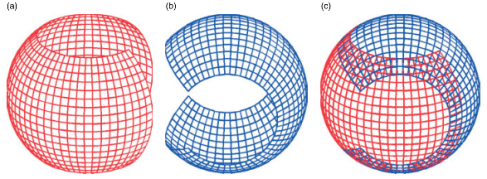

The most popular alternative is the “cubed-sphere” grid. Essentially, this is made by drawing a rectangular grid on each face of a cube, then “inflating” it into a sphere. This can be done in slightly different ways, and two variants are shown below. Like the latitude-longitude grid, these also have regular structure on each of the six ‘faces’, which allows the model to run faster, and the gridboxes are quadrilaterals, which (as mentioned before) is also good for accuracy.

Two variants of a cubed-sphere grid. Image taken from [1]

The first variant shown has gridlines crossing at right-angles, which is good for accuracy, but has closely-spaced points near the ‘vertices’ of the cube: a miniature version of the latitude-longitude grid problem. The second variant doesn’t suffer from this, but its gridlines don’t cross at right-angles, which may compromise accuracy slightly. One possible issue with both is that several points in the grid are “special”, because the vertices of the cube are only at the corners of three surrounding cells, not four. This is bound to have some effect on the output of the model, but it is far too early to say what, or whether it would even be noticeable.
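The “inflating” step can be sketched very simply: take a regular grid on one face of a cube and push each point outwards until it sits on the sphere. The function name and the evenly spaced face grid below are assumptions made for illustration. This is just one straightforward way of doing the projection, and is not necessarily either of the two variants pictured.

```python
import math

def cube_face_to_sphere(n):
    """Points of an n-by-n grid on the cube face x = 1, pushed out to the unit sphere."""
    points = []
    for i in range(n):
        for j in range(n):
            # Cell-centre coordinates on the face, each strictly between -1 and 1.
            y = -1.0 + (2.0 * i + 1.0) / n
            z = -1.0 + (2.0 * j + 1.0) / n
            # Dividing by the distance from the centre moves the point onto the sphere.
            norm = math.sqrt(1.0 + y * y + z * z)
            points.append((1.0 / norm, y / norm, z / norm))
    return points

face = cube_face_to_sphere(4)
print(len(face))  # 16 points on this face; the other five faces follow by symmetry
```

Different choices of how the points are spaced on the face before projecting lead to different trade-offs between uniform spacing and right-angled gridlines.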

A more outlandish idea than the cubed-sphere is to use multiple, overlapping, sub-grids. Two nice examples are the “Yin-Yang” grid, and a “modified Yin-Yang” grid, shown below. Each sub-grid has a regular structure, is made up of quadrilaterals, and has grid lines crossing at right-angles, which is good news for speed and accuracy. However, information needs to be transferred between the grids using some kind of averaging, which will almost certainly compromise accuracy.

The Yin-Yang grid, formed of two partial latitude-longitude grids. Image from [1], originally provided by Abdessamad Qaddouri

A modified Yin-Yang grid, formed of three sub-grids. Image taken from [1], originally provided by Mohamed Zerroukat

Other shapes are much better for covering a sphere than quadrilaterals. For example, the figure below shows triangular and pentagonal-hexagonal grids. However, we have mentioned several times that quadrilateral cells provide an advantage in accuracy, and we would lose this by using a triangular or pentagonal-hexagonal grid.

A triangular grid, and the related grid formed of hexagons and (exactly 12) pentagons. Image taken from [1].
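The “exactly 12” is not a design choice. It follows from Euler’s polyhedron formula, under the assumption that exactly three cells meet at every corner (which holds for a grid of this kind built from a triangular grid). Writing $P$ for the number of pentagons and $H$ for the number of hexagons, and counting each edge twice and each corner three times:

$$
F = P + H, \qquad E = \frac{5P + 6H}{2}, \qquad V = \frac{5P + 6H}{3}
$$

$$
V - E + F = 2 \;\Longrightarrow\; \frac{5P + 6H}{3} - \frac{5P + 6H}{2} + P + H = \frac{P}{6} = 2 \;\Longrightarrow\; P = 12
$$

The number of hexagons $H$ drops out entirely, so the grid can be refined with as many hexagons as desired but will always keep its 12 pentagons.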

So, what is the best grid to use? Of course, this is the topic of much current research. Muddying the waters further, some of the above remarks assumed that the underlying equations were approximated in a particular way. Many current models are indeed in this category, but future models may not be. Ultimately, the grid is just one small part of a much bigger model, and should not be considered in isolation! As usual — more research is needed.

References

[1] Andrew Staniforth and John Thuburn. Horizontal grids for global weather and climate prediction models: a review. Quarterly Journal of the Royal Meteorological Society, 138(662): 1–26, 2012
